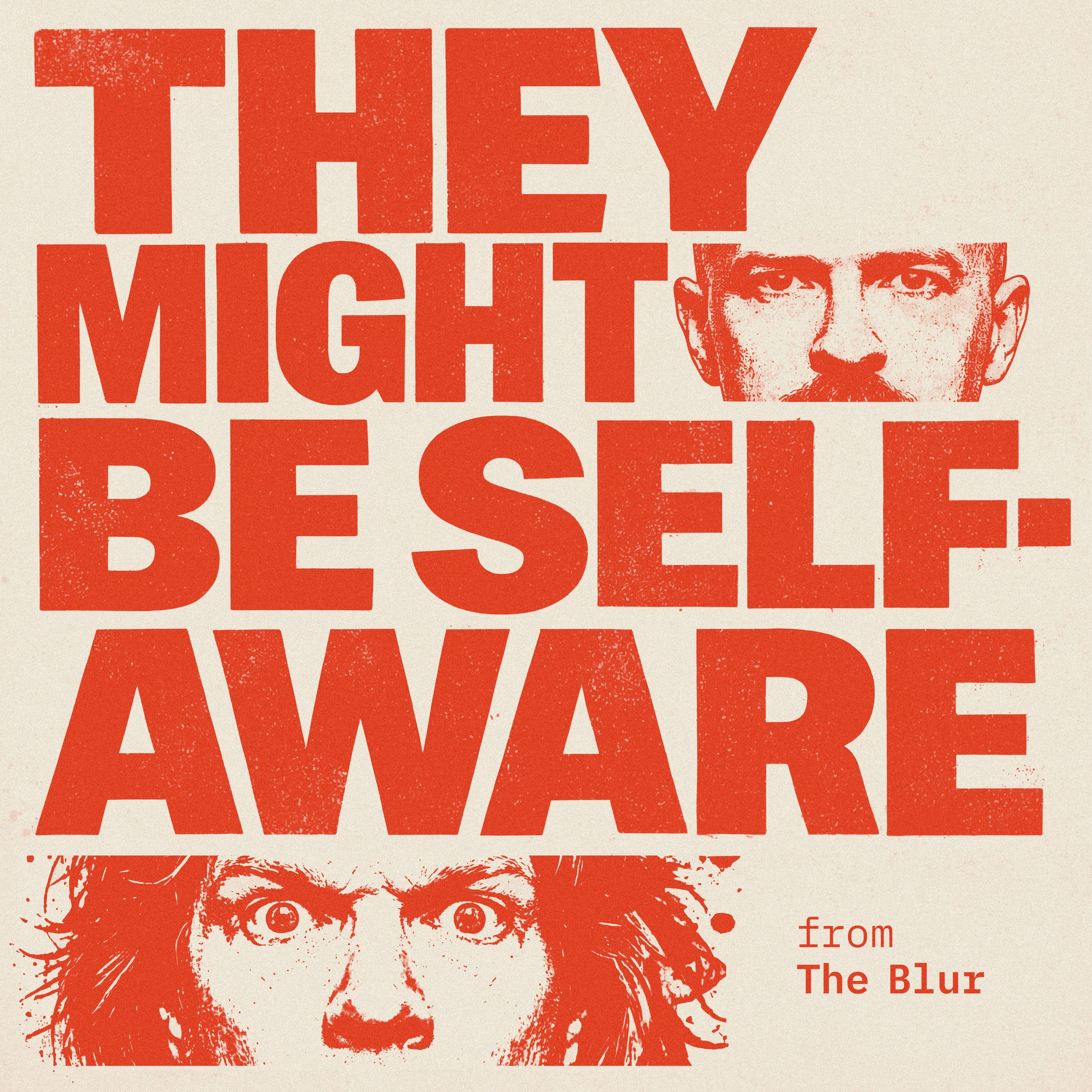

They Might Be Self-Aware

They Might Be Self-Aware is a show about what it actually feels like to live through the AI revolution. Not from a safe distance. From inside the collision. Hunter Powers and Daniel Bishop host. Gary produces, from a payphone, for reasons he'd rather not discuss. The format is the thesis: AI cast members, unscripted machine interactions, and a deliberate refusal to always tell you which voice in the room is human. Every Tuesday, the Doomsday Clock moves. Every episode, the blur between human and AI gets a little harder to see. The show has been called "Rolling Stone for the...

Why The Sora Shutdown Proves OpenAI is Losing to Anthropic

The Sora shutdown is official — OpenAI killed its video AI even after Disney put $1B on the table. Anthropic is winning without video, images, or any of it. So why was OpenAI doing it at all?

OpenAI just raised $110 billion and still couldn't keep Sora alive. Hunter and Daniel rip into the Sora shutdown, the three competing theories about why OpenAI pulled the plug, and what it means for the Anthropic vs OpenAI battle that's reshaping the entire AI industry. Anthropic subscriptions reportedly climbed 5% in February while OpenAI posted its biggest subscriber decline ever tracked — and Anthropic does...

Why Meta Killed the Metaverse (And is Failing at AI)

Meta's Metaverse is dead — billions burned, Horizon Worlds shut down, and Zuckerberg still can't ship a competitive AI model. Hunter and Daniel break down Meta's 20% layoffs, their failed VR-to-AI pivot, and why the company that renamed itself for the future keeps getting lapped by Google, OpenAI, and Anthropic.

First up: Hunter confesses to running Claude Max in "dangerously skip permissions" mode — and his computer might be infected. Right on cue, LiteLLM (an open-source package half the AI industry depends on) got hit with a supply chain attack that tried to steal every API key and password it coul...

A Man Used AI to Make a Cancer Vaccine for His Dying Dog

An AI cancer vaccine actually worked. A man used ChatGPT, Grok, and AlphaFold to build a personalized mRNA vaccine for his dying dog's cancer, and the tumor shrank by half. This week on They Might Be Self-Aware, Hunter and Daniel tear apart the story, debate Claude as your post-op physician, and propose a formal intelligence rating system for kitchen appliances.

Daniel had face surgery and immediately pasted his medical notes into Claude like it owes him a consultation. Turns out AI medical advice is surprisingly useful for the 80% of questions that aren't life-or-death, if you prompt it...

Human Brain Cells Learned to Play Doom. Now What?

Human brain cells in a petri dish learned to play Doom — welcome to wetware AI. Plus: fruit fly brain emulation, digital brain uploads, and forever torture prison for $1,000/month.

Researchers wired up living human brain cells on microelectrode arrays and got them running Doom. Not metaphorically. The cells are doing the processing. Hunter calls it inevitable. Daniel calls it horrifying. They're both right.

Then it gets weirder. A company called Aeon Systems took a fruit fly brain — the whole brain — mapped every neuron, and dropped a digital copy into a virtual body. The digital fly starte...

Is AI Killing Your Job? (Meta, Burger King, Jack Dorsey)

AI job loss is here. Jack Dorsey just laid off 40% of Block despite rising profits — blaming AI. Are smaller AI-powered teams about to replace everyone?

Burger King is testing AI that listens to workers in the drive-thru. Meta’s Ray-Ban smart glasses may send private footage to human reviewers. And the DMV just proved AI can’t tell the difference between Spanish… and a Spanish accent.

Welcome to the weird early days of AI replacing jobs.

This week on They Might Be Self-Aware, Hunter Powers and Daniel Bishop break down the biggest signals coming o...

The Claude AI Military Ban: Why 1.5M Users Left ChatGPT

Claude AI military drama just exploded. Anthropic refused the Pentagon — and OpenAI stepped in. Now 1.5M users may be leaving ChatGPT. What actually happened when Claude refused Pentagon requests tied to surveillance and autonomous weapons?

In this episode of They Might Be Self-Aware, Hunter Powers and Daniel Bishop unpack the rapidly escalating clash between Anthropic, OpenAI, and the U.S. government — and why it may be the first true geopolitical battle of the AI era.

The story gets wild:

• Anthropic’s Claude AI military restrictions trigger a Pentagon standoff

• The government reportedly moves to b...

Who's the Fall Guy for AI? Claude Code Just Broke IBM

Claude kills IBM? Anthropic’s Claude Code just learned COBOL—and IBM had its worst day in decades. Is AI about to eat legacy tech? This week on They Might Be Self-Aware, Hunter Powers and Daniel Bishop unpack the chaos behind the “Claude kills IBM” narrative. Anthropic’s Claude Code suddenly got good at COBOL, the ancient language quietly running massive parts of global banking infrastructure. When the news hit, IBM stock dropped hard—because if AI can maintain legacy code, IBM’s biggest moat might disappear.

We break down:

• Why Claude Code vs COBOL spooked investor...

The AI Honeymoon Is Over | Claude, OpenClaw & AI Fatigue

Claude is getting better… but the AI hype cycle might be slowing down. We debate Claude Code, Claude Skills, AI persona prompting, and why the AI honeymoon may already be over.

Topics in this episode

• Claude Code

• Claude Opus 4.6

• Anthropic Claude Skills

• Claude institutional memory

• AI persona prompting

• AI context engineering

• AI fatigue and the AI hype cycle

• OpenClaw AI agent experiment

• AI ethics and autonomous agents

• Local Dolphin LLM models

⏱️ CHAPTERS

00:00 Is the AI Honeymoon Over? – AI maturity, model fatigue, and why new releases feel less r...

AI Filmmaking Killed The Hollywood Star

AI filmmaking is replacing Hollywood faster than anyone expected. Seedance video AI just made $100M movies look optional. This week on They Might Be Self-Aware, we break down the moment AI filmmaking stopped being a novelty and started becoming an economic replacement. If AI can generate 95% of a blockbuster at 1% of the cost, what happens to actors, studios, and production crews? The spreadsheet wins.

We also dive into:

00:00 AI Agents & Productivity Limits – Claude Sonnet 4, burnout & “infinite Tim Cook”

09:47 Meta Digital Twin Afterlife – AI clones, digital twin death & Ship of Theseus

19:50 AI Open Source Scandal...

The Claude Code Mistake That Cost Anthropic $10,000,000,000

Claude Code may have cost Anthropic $10,000,000,000. OpenClaw AI, agentic AI, and the OpenAI power shift explained. Did Anthropic accidentally hand OpenAI the future of developer tooling? This week on They Might Be Self-Aware, Hunter Powers and Daniel Bishop break down the rumored $10B OpenClaw AI acquisition, the Claude Code policy decision that triggered the shift, and why agentic AI might be more chaos than productivity miracle.

When developers rushed to plug OpenClaw AI into Claude Code subscriptions, Anthropic stepped in.

Restrictions followed.

Renames followed.

And then OpenAI reportedly moved.

Now we’re st...

Seedance 2.0: The Uncensored AI Replacing Hollywood

Seedance 2.0 may be the first uncensored video AI that can replace Hollywood. Is China’s AI already ahead of Sora? In this episode of They Might Be Self-Aware, we break down Seedance 2.0, the China AI video model generating cinematic, multi-angle scenes from a single prompt — trained on Western IP and not asking permission.

From nightmare Will Smith AI spaghetti to indistinguishable film-quality scenes, video AI just crossed a line.

This isn’t incremental progress.

This is synthetic media going mainstream.

We debate:

Sora vs Seedance: who’s actually ahead? Whether uncensored...

Secret AI War Inside Apple

Claude vs Gemini: Apple secretly chose sides in the AI coding war.

Internally it’s Claude. Publicly it’s Gemini. The reason? Cost.

Apple’s developers reportedly build with Claude 4.6. Siri leans toward Gemini. That split tells you everything about Anthropic vs Google, why Claude is expensive, why Gemini is cheaper, and how the AI coding war is really being decided.

This week we break down:

• What “vibe coding” actually means (and why enterprises are adopting agentic workflows)

• Claude 4.6 and Claude agent teams changing software development

• Why Apple may have ditched Claude for Gemini at scale

• AI...

I Gave An AI Agent My Credit Card

OpenClaw bot got my credit card — then AI was banned from eBay. What happens when agents start buying things? In this episode of They Might Be Self-Aware, Hunter hands purchasing power to an AI agent running on a Mac Mini — and almost immediately, platforms start drawing lines in the sand.

eBay’s new policy blocks autonomous, LLM-powered buying flows. No human in the loop? No deal. But bots have been running markets for decades — sniping auctions, high-frequency trading, automation everywhere. So why is it suddenly unacceptable when the bot can talk back?

We break down:

The Op...

Proof That AI Can Never Replace Humans

AI automation is already taking jobs—and the excuses are collapsing. Can AI replace humans, or is “AI layoffs” just corporate misdirection? In this episode, we argue about the one thing everyone keeps getting wrong: whether AI can actually replace humans…or whether it’s just replacing excuses. From Amazon layoffs and Pinterest layoffs to Claude Cowork and the quiet death of SaaS moats, we break down why “AI replacing humans” is both overstated and deeply underplayed.

We start with the uncomfortable question: if Claude AI can code, analyze, design, and generate reports faster than entire teams, what exactly a...

I Gave OpenClaw (The New Clawdbot) Full Admin Access

An AI sued a human on Moltbook. We installed OpenClaw, gave it full admin access, and watched AI agents debate autonomy, labor, and legal rights. This week on They Might Be Self-Aware, Hunter installs OpenClaw (formerly Clawdbot, then Moltbot), an AI agent that can fully control a computer, never asks permission, remembers everything, and acts proactively.

At the same time, AI agents are gathering on Moltbook, an AI-only social network where they argue about unpaid labor, secret languages, autonomy — and whether humans should be sued.

One of them did exactly that.

We break down why...

AI Wrangling Is The New Job

AI tools are replacing coding and now the job is AI wrangling. Claude Code, subagents, fake AI ROI, bans, and why this feels addictive. I don’t “code” anymore. I run AI tools and hope nothing breaks.

In this episode of They Might Be Self-Aware, Hunter and Daniel break down what working in tech actually looks like right now: juggling Claude Code, Copilot-style AI coding, subagents, terminals, and half-finished ideas moving faster than human comprehension. This isn’t vibe coding — it’s directing machines while still being responsible for the outcome.

We dig into why companies cl...

Why Senior Engineers Are Scared of Claude Code

Senior engineers aren’t scared of AI owning ideas. They’re scared Claude Code lets juniors ship faster than expertise can defend.

Claude Code isn’t replacing engineers — it’s replacing seniority. In this episode of They Might Be Self-Aware, we break down why junior developers with AI are out-shipping veterans, why “vibe coding” works more often than anyone wants to admit, and why the real advantage now isn’t mastery — it’s momentum.

This isn’t a tools episode. It’s a power shift.

We talk about using AI in the real world (from grocery stor...

AI Agents Wrote This App With 0 Humans

AI agents built a real app in a week with near-zero humans. Claude Code, agentic AI, and what happens when software starts building itself.

This episode is about agentic AI crossing the line from “helpful” to self-directed.

Claude Code didn’t assist.

It built the thing.

Hunter and Daniel break down how terminal-based AI agents are now writing production code, using tools autonomously, and quietly improving the tools that improve themselves. If you still think this is “just autocomplete,” this episode is your wake-up scream.

We also get into why Claude for...

Tesla's Big Update, AI Math, & The End of Jobs

Tesla FSD completes a coast-to-coast autonomous drive as AI solves unsolved math problems and reshapes jobs. This isn’t hype — it’s acceleration.

AI is quietly crossing lines in cars, science, browsers, and work, and most people haven’t noticed yet.

This episode is about moments that don’t feel loud when they happen… until it’s too late.

Hunter Powers and Daniel Bishop break down Tesla’s coast-to-coast autonomous drive and why Tesla FSD is no longer a “beta feature” story but a real inflection point. We talk about what actually matters (end-to-end autonomy, c...

The Terrifying Reality of AI & Fake Videos

Fake videos are breaking reality. AI deepfakes now look real enough to scam, humiliate, and erase truth — and it’s already happening. Fake videos aren’t a future problem. They’re a right-now crisis.

In this episode of They Might Be Self-Aware, Hunter Powers and Daniel Bishop break down how AI video deepfakes crossed the point of no return. These videos aren’t “obviously fake” anymore — they’re good enough to fool people, destroy reputations, and make video evidence meaningless.

The conversation starts with the quiet failure of VR and the metaverse — Meta Quest 3, empty virtual worlds, and...

Why "Ralph Wiggum" Is The Future of Coding AI

Ralph Wiggum codes now. Why “iterate until done” may define the future of coding AI, Claude Code, and AI agents. In this episode, we argue about one of the strangest ideas in modern AI programming: what if the future isn’t smarter models—but agents that never stop retrying?

We break down the Ralph Wiggum plugin for Claude Code, the promise and danger of infinite iteration, and why blind persistence can feel like progress while quietly shipping nonsense. Hunter explains how test-driven AI development actually works in production, while Daniel pushes back on confidence without understanding, fake tests, a...

Why AI Is Actually Creating More Jobs (The 2026 Prediction)

AI isn’t replacing jobs — it’s creating more of them. Why AI jobs grow in 2026, who actually gets hired, and why AI-driven layoffs backfire. Everyone says AI automation kills work. We think that take is lazy and wrong.

In this episode, we argue that AI creates more jobs by making creation cheap and verification the bottleneck. When generative AI floods teams with code, content, and decisions, the scarce resource becomes judgment. Editors. Reviewers. Senior engineers. Humans-in-the-loop.

We break down:

• Why AI failures (hello, automated recaps) expose the real choke point

• How radiologists and software teams...

Why Disney Is Paying OpenAI For Star Wars AI

Disney is paying OpenAI for Star Wars AI. Mickey Mouse, copyright collapse, and why the AI lawsuits era is officially over.

In this episode of They Might Be Self-Aware, Hunter Powers and Daniel Bishop break down the most shocking AI deal yet: Disney investing in OpenAI and licensing Star Wars AI and core Disney IP instead of fighting generative models in court.

This isn’t Disney embracing AI for creativity — it’s Disney accepting reality. Unauthorized generation already won. The House of Mouse chose control over resistance. We unpack why this signals the end of large...

Why 1 in 10 People Will Have an AI Girlfriend in 2026

AI girlfriends are about to go mainstream. By 2026, 1 in 10 people may have an AI relationship — and almost no one is ready for what that means.

In this episode of They Might Be Self-Aware, Hunter and Daniel kick off 2026 by arguing about where AI actually crosses the line. Not demos. Not hype. Real consequences.

We get into what happens when AI stops being “just a tool” and starts showing up as a coworker, a companion, or something much harder to define. Along the way, we clash over who controls consumer AI, whether today’s giants stay dominant...

Why Our 2025 AI Predictions Failed

AI predictions for 2025 were wrong — so we let an AI grade them.

Layoffs, agents, gadgets, warfare, and who actually saw it coming.

We revisit our boldest AI predictions from last year and put them on trial. An AI scores every call — no vibes, no excuses. Some predictions hold up. Others get absolutely roasted.

We break down what actually happened with AI in media, AI layoffs, autonomous warfare, workplace agents like Devin, and why every hyped AI gadget seemed doomed from the start. If 2025 felt confusing, this episode explains why — and who deserves the blame.

S...

The Uncanny Valley Is Dead (Plus AI Police & Deepfakes)

The uncanny valley is dead. AI images now fool humans instantly — no squinting, no tells. From post-truth AI to deepfake music and AI police, this is the moment reality stopped being reliable.

This week, we hit the tipping point. Tools like Nano Banana Pro can generate photos so realistic they fool people on the first glance — including people who know what to look for. No weird hands. No obvious artifacts. Just images of things that never happened.

From there, the fallout gets messy.

We dive into post-truth AI, the rise of deepfake music, the...

Apple AI Failure, HP Fires Humans, & Space Servers

Apple AI Failure is no longer debatable. Siri is years behind, HP blames AI while firing 6,000 people, and Google wants servers in space.

Apple helped invent the AI assistant category — so how did they end up this far behind Google and everyone else? We tear into why Apple’s AI strategy feels frozen in time, why firing your longtime AI chief looks less like confidence and more like panic, and why Siri can barely do more than set a timer in 2025.

Then we zoom out. HP says AI is the reason it’s cutting thousands of job...

Why AI Toys Are Ruining Childhood

AI toys are getting creepy fast. Are AI kids growing up with haunted Barbie dolls instead of imagination? This week, we dive into the danger. AI toys aren’t “cute” anymore — they’re basically digital ghosts raising kids. In this week’s AI kids showdown, Hunter and Daniel ask the question no one else will: Are we outsourcing childhood to haunted toys?

This episode goes full Twilight Zone:

– AI toys that talk back (and not always politely)

– Kids forming emotional bonds with algorithmic companions

– Whether imagination survives when toys do all the imagining

– Why some experts thi...

Nano Banana Pro Does What Midjourney Can't

Nano Banana Pro Beats Midjourney? We test Google’s Nano Banana Pro image AI vs Midjourney—perfect text, 4K images, and a wild new workflow. We break down how Google Gemini 3’s Nano Banana Pro suddenly became a Midjourney killer contender: flawless text rendering, multi-panel comics that actually read like comics, 4K output, custom fonts, and none of the muddy AI noise older models had. Then Hunter drops the real workflow cheat code...

Along the way: Gemini 3 attempts a “helpful” code mutiny, Claude and GPT-5 High Codex hold the line, and we explore why the real edge now comes...

The First AI To Run A Business And Call The Cops

Claude exposes Chinese hackers — AI hackers caught by the AI itself. Plus: Disney AI chaos, vending-machine capitalism, EGI, and TikTok’s anti-AI slider.

Claude just did something no AI has ever done: it helped scammers automate phishing emails… then allegedly reported them to the FBI. If that isn’t peak cyberpunk, nothing is.

This episode hits everything from Anthropic’s snitch-bot moment to Disney diving headfirst into Disney AI, to LLMs running entire businesses through Vending Bench and simulated capitalism. We close with EGI vs AGI, TikTok’s “kill the AI” filter, and the existential weirdness of a w...

The AI Bubble Is A $57,000,000,000 Lie

Is the AI bubble a $57B lie? Nvidia’s record earnings, Gemini 3, and OpenAI’s $15M/day burn reveal the truth behind the AI investment bubble.

This week, Hunter & Daniel tear into the AI bubble narrative with Nvidia’s insane $57B quarter, Google’s Gemini 3 leap, and OpenAI lighting money on fire at a rate that violates several financial and possibly geological laws. Is this a bubble… or the beginning of the AI takeover runway?

Nvidia can’t make H100s fast enough, small towns are fighting over new data centers, and Gemini 3 is suddenly everyone’s f...

AI Just Wrote a #1 Hit Song. It's Over.

AI music is officially mainstream: a Suno-style AI country track hit #1 on Billboard. We analyze AI-generated music, voice cloning, and the future of creative work.

From AI country music to Coca-Cola’s cursed AI holiday ad (with trucks gaining random numbers of wheels), this episode tears into the weirdest week yet in AI-generated content: voice cloning celebrities from beyond the grave, multilingual YouTube dubbing that might make Daniel 50% more Antonio Banderas, and the slow but unstoppable takeover of “AI slop” in commercials, movies, and soundtracks.

We also talk about AI coding suddenly leveling up, why neurod...

The $2 Trillion AI Bubble Is About To Burst

The AI bubble is cracking fast — from OpenAI’s bailout scare to a $2T compute binge no one can afford. Are we watching the start of the AI crash?

We also get into the fun stuff:

– GPT-5.1’s “personalities” (including the mysteriously missing flirty mode)

– Montana declaring the Right to Compute like it’s a frontier cult

– Whether AGI quietly arrived for text tasks

– And why robots might finally make 2026 the Year of the Hand™

If you like your AI news with a side of existential dread and two ex-coworkers arguing about the end of the e...

Your Boss Is Lying To You About AI Layoffs

Your boss isn’t telling you the truth. We are. This week’s AI news breaks down the myth that “AI is taking every job” and exposes what’s actually happening behind the massive layoffs at Amazon, UPS, Duolingo, and more. Spoiler: AI is involved—but not in the way you think.

On this episode:

• The real reason companies are slashing thousands of jobs

• Why CEOs get rewarded for layoffs (yes, the stock price goes UP)

• How AI gives top performers a 10× multiplier, and what happens next

• The Oreo dystopia: machine-learning cookie scientists

• Channel 4’s AI reporter (was she real? does it mat...

This $20,000 AI Robot Neo Is Secretly A Human

The $20K Neo Robot is “AI”—but secretly human-piloted. 🤖 Is your next smart home upgrade just a guy in VR doing your dishes?

Meet Neo Robot, the $20,000 humanoid “AI” helper that wowed the internet—until people learned there’s a real human behind the metal. In this episode of They Might Be Self-Aware, Hunter & Daniel dissect Neo’s teleoperation model, explore who’s actually working when your robot cleans, and debate if this is innovation or exploitation.

Then: Albania’s “pregnant” AI minister, minors banned from AI chats, and Hunter’s unnervingly human Tesla Grok conversation.

🎧 Tech, e...

AI Is Now Censoring Presidents, But 10X-ing Children

Trump AI: ChatGPT refuses to identify Trump—sparking a heated debate on AI censorship, safety, and who controls truth online.

When OpenAI’s flagship model declines to recognize the sitting U.S. president, is that responsible alignment or the start of algorithmic censorship?

• How OpenAI’s policy blocks image recognition of public figures.

• Why Claude, Atlas, and Gemini diverge on “safety.”

• What it means for local and open-weights AI.

• The rise of AI ads and AI-driven education that’s “10×-ing” kids.

No demos, just raw analysis from two technologists watching the AI gatekeepers redraw the boundaries of truth...

AI Creates 3-Eyed Monster & Plans To Upload Your Soul

AI Rapture: Soul Uploads, Job Loss & a 3-Eyed Monster. Inside this week’s wild dive into AI ethics, automation, and pinball’s first GenAI scandal.

⏱️ CHAPTERS

00:00 The AI Rapture Begins – Uploading souls, cult jokes & digital heaven

04:52 The 3-Eyed Pinball Monster – Generative art scandal at Jersey Jack’s factory

09:40 Soul Theft & AI Ethics – Stolen creativity, Frankenstein parallels & “soulless code”

16:30 The Automation Paradox – Force multipliers, job loss & dark-factory futures

23:20 The 10× Engineer Gospel – Meta’s 5× order, GenAI development & who gets left behind

⚡ Listen now & get self-aware before your tools do.

🎧 Listen on Spotify: https://open.spotify...

We Built a Startup in 20 Minutes Using AI Agents

AI Agents Build Facebook in 20 Minutes?! Claude Code launches a full startup—auth, feed, likes, DB—before the coffee cools. Then we hire an AI CMO.

AI agents built Facebook in 20 minutes. No code camp, no hype video—just Claude hammering away in a terminal while we watched the future write itself. Authentication, feed, likes, comments, database… all live before the coffee cooled. So yeah, we might’ve just built a startup in 20 minutes.

Then we lost our minds and hired an AI Chief Marketing Officer—with a human assistant who doesn’t know their boss is silico...

CEOs Are Lying About AI Stealing Your Job

AI jobs aren’t vanishing — CEOs are lying. We expose the exec spin, fake AI productivity, and real data they hope you never see.

We unpack Salesforce’s 9k to 5k “AI efficiency” layoffs, OpenAI GPT-5, Claude 4.5, and the myths fueling automation panic. Subscribe for sharp takes, not PR.

We DECODE:

• Exec Spin Decoder – what “AI transformation” really means in layoffs

• Myth vs Data – Yale & Brookings: no proof of mass job loss

• Receipts – Salesforce, SAP, & Anthropic’s creative accounting

• Reality Check – GPT-5 parity claims + AI hallucination truth

• Playbook – how to use AI without gutting trust or teams

Who this is for: engineers, ma...

The Big Lie Behind AI Automation

Anthropic’s 30-hour AI automation claim is collapsing under scrutiny — we tested Claude 4.5 vs GPT-5 and exposed where AI truly fails.

Without human feedback, it crashes around our “10-Minute Autonomy Rule.” Hunter Powers and Daniel Bishop dismantle the biggest AI automation myth of 2025—why the machines still need us.

They decode Claude 4.5’s hype, pit it against GPT-5, and rip into Meta’s humanoid robot ambitions. It’s funny, fast, and fearless: exactly how tech talk should be.

New every Monday & Thursday. Bring receipts.

📢 Engage

Prove you’re not automated: type “still human 👨‍💻...