Latent Space: The AI Engineer Podcast

The podcast by and for AI Engineers! In 2025, over 10 million readers and listeners came to Latent Space to hear about news, papers and interviews in Software 3.0. We cover Foundation Models changing every domain in Code Generation, Multimodality, AI Agents, GPU Infra and more, directly from the founders, builders, and thinkers involved in pushing the cutting edge. We strive to give you everything from the definitive take on the Current Thing down to the first introduction to the tech you'll be using in the next 3 months! We break news and exclusive interviews from OpenAI, Anthropic, Gemini, Meta (Soumith Chintala), Sierra (Bret Taylor), tiny...

Extreme Harness Engineering for Token Billionaires: 1M LOC, 1B toks/day, 0% human code, 0% human review — Ryan Lopopolo, OpenAI Frontier & Symphony

We’re proud to release this ahead of Ryan’s keynote at AIE Europe. Hit the bell, get notified when it is live! Attendees: come prepped for Ryan’s AMA with Vibhu after.

Move over, context engineering. Now it’s time for Harness engineering and the age of the token billionaires.

Ryan Lopopolo of OpenAI is leading that charge, recently publishing a lengthy essay on Harness Eng that has become the talk of the town:

In it, Ryan peeled back the curtains on how the recently announced OpenAI Frontier team have become OpenAI’s top Code...

Marc Andreessen introspects on The Death of the Browser, Pi + OpenClaw, and Why "This Time Is Different"

Fresh off raising a monster $15B, Marc Andreessen has lived through multiple computing platform shifts firsthand, from Mosaic and Netscape to cofounding a16z.

In this episode, Marc joins swyx and Alessio in a16z’s legendary Sand Hill Road office to argue that AI is not just another hype cycle, but the payoff of an “80-year overnight success”: from neural nets and expert systems to transformers, reasoning models, coding, agents, and recursive self-improvement. He lays out why he thinks this moment is different, why AI is finally escaping the old boom-bust pattern, and why the real bottle...

Moonlake: Causal World Models should be Multimodal, Interactive, and Efficient — with Chris Manning and Fan-yun Sun

We’ve been on a bit of a mini World Models series over the last quarter: from introducing the topic with Yi Tay, to exploring Marble with World Labs’ Fei-Fei Li and Justin Johnson, to previewing World Models learned from massive gaming datasets with General Intuition’s Pim de Witte (who has now written down their approach to World Models with Not Boring), to discussing the Cosmos World Model with Andrew White of Edison Scientific on our new Science pod, to writing up our own theses on Adversarial World Models. Meanwhile Nvidia, Waymo and Tesla have published their own ap...

Mistral: Voxtral TTS, Forge, Leanstral, & what's next for Mistral 4 — w/ Pavan Kumar Reddy & Guillaume Lample

Mistral has been on an absolute tear - with frequent successful model launches it is easy to forget that they raised the largest European AI round in history last year. We were long overdue for a Mistral episode, and we were very fortunate to work with Sophia and Howard to catch up with Pavan (Voxtral lead) and Guillaume (Chief Scientist, Co-founder) on the occasion of this week’s Voxtral TTS launch:

Mistral can’t directly say it, but the benchmarks do imply that this is basically an open-weights ElevenLabs-level TTS model (Technically, it is a 4B Ministral base...

🔬Why There Is No "AlphaFold for Materials" — AI for Materials Discovery with Heather Kulik

Materials science is the unsung hero of the science world. Behind every physical product you interact with are decades of research into getting the properties of materials just right. Your gym clothes contain synthetic fibers developed over decades. The glass screen, diodes, and chip substrate technology needed to read this blog post were only viable due to many teams of material scientists.

Our guest Prof. Heather Kulik was one of the first material scientists to realize that there was alpha in combining computational tools with data driven modeling — she did AI for science before it was cool. She ha...

Dreamer: the Personal Agent OS — David Singleton

Mar 23 update for Latent Spacenauts: this episode was recorded before the Dreamer team announced they were joining Meta Superintelligence Labs, and it turned out to be the last interview they did before the news became public. Consider this a snapshot from just before the transition!

In 2024, David Singleton left Stripe and joined forces with Hugo Barra for a buzzy stealth startup named /dev/agents. This month they emerged as Dreamer, a consumer-first platform to discover, build, and use AI agents and agentic apps, centered on a personal “Sidekick” that helps users customize experiences via natural language.

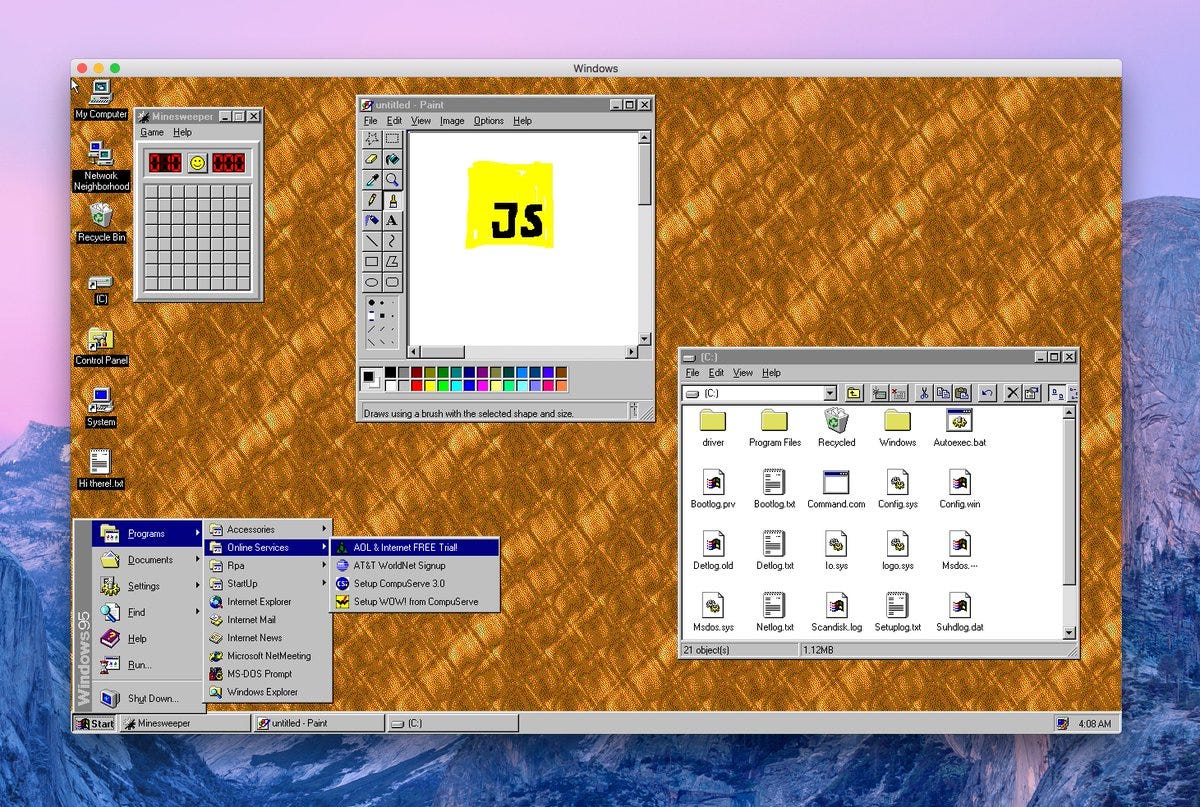

Why Anthropic Thinks AI Should Have Its Own Computer — Felix Rieseberg of Claude Cowork & Claude Code Desktop

Claude Cowork came out of an accident.

Felix and the Anthropic team noticed something interesting with Claude Code: many users were using it primarily for all kinds of messy knowledge work instead of coding. Even technical builders would use it for lots of non-technical work.

Even more shocking, Claude Cowork wrote itself. With a team of humans simply orchestrating multiple Claude Code instances, the tool was ready after a brief week and a half.

This isn’t Felix’s first rodeo with impactful and playful desktop apps. He’s helped ship the Slack deskto...

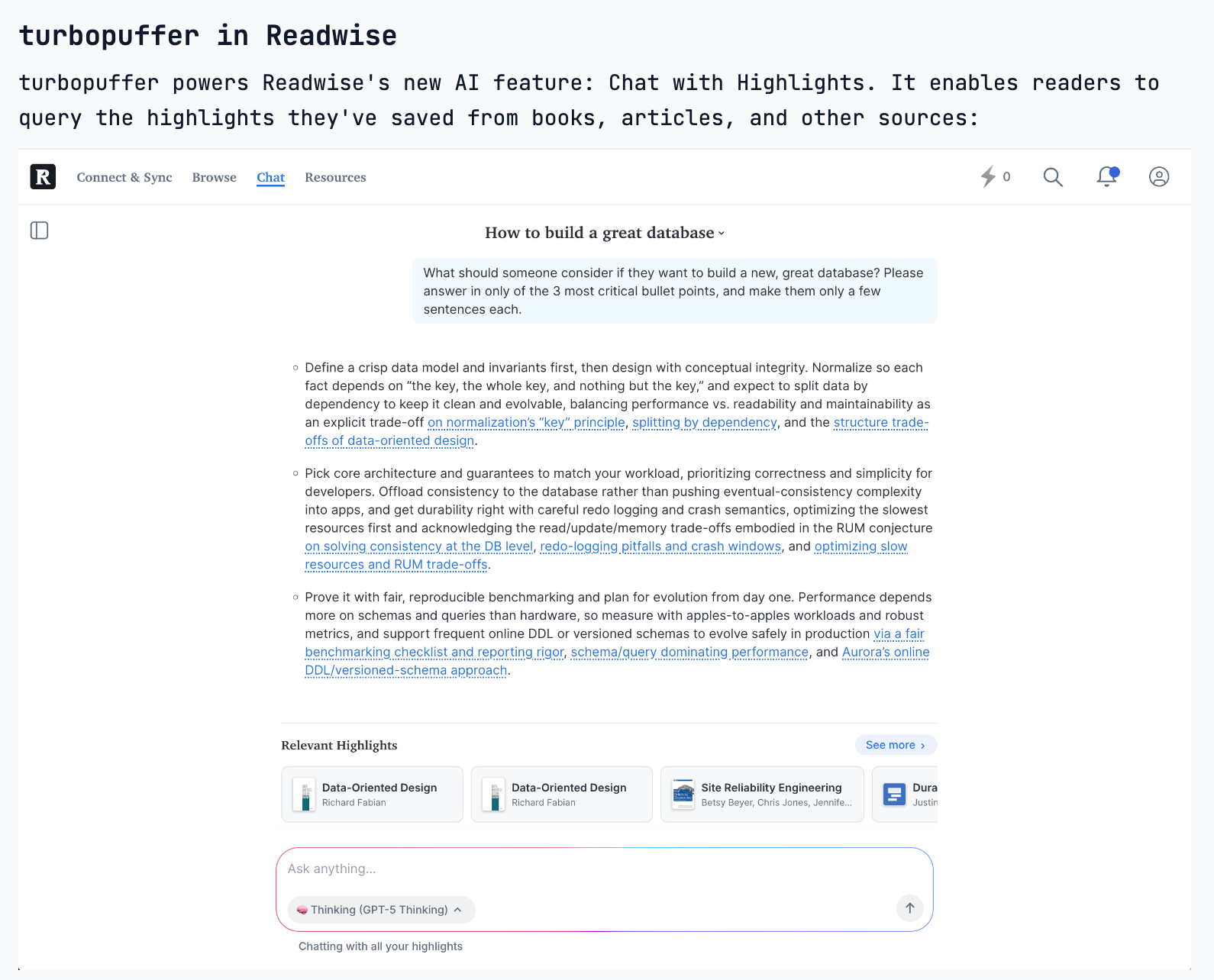

Retrieval After RAG: Hybrid Search, Agents, and Database Design — Simon Hørup Eskildsen of Turbopuffer

Turbopuffer came out of a reading app.

In 2022, Simon was helping his friends at Readwise scale their infra for a highly requested feature: article recommendations and semantic search. Readwise was paying ~$5k/month for their relational database, and vector search would cost ~$20k/month, making the feature too expensive to ship. In 2023, after mulling over the problem from Readwise, Simon decided he wanted to “build a search engine”, which became Turbopuffer.

We discuss:

• Simon’s path: Denmark → Shopify infra for nearly a decade → “angel engineering” across startups like Readwise, Replicate, and Causal → turbopuffer almost accidentally becoming a company...

NVIDIA's AI Engineers: Agent Inference at Planetary Scale and "Speed of Light" — Nader Khalil (Brev), Kyle Kranen (Dynamo)

Join Kyle, Nader, Vibhu, and swyx live at NVIDIA GTC next week!

Now that AIE Europe tix are ~sold out, our attention turns to Miami and World’s Fair!

The definitive AI Accelerator chip company has more than 10xed this AI Summer:

And is now a $4.4 trillion megacorp… that is somehow still moving like a startup. We are blessed to have a unique relationship with our first ever NVIDIA guests: Kyle Kranen who gave a great inference keynote at the first World’s Fair and is one of the leading architects of NVIDIA Dynamo...

Cursor's Third Era: Cloud Agents

All speakers are announced at AIE EU, schedule coming soon. Join us there or in Miami with the renowned organizers of React Miami! Singapore CFP also open!

We’ve called this out a few times over in AINews, but the overwhelming consensus in the Valley is that “the IDE is Dead”. In November it was just a gut feeling, but now we actually have data: even at the canonical “VSCode Fork” company, people are officially using more agents than tab autocomplete (the first wave of AI coding):

Cursor has offered cloud agents for a few months now...

Every Agent Needs a Box — Aaron Levie, Box

The reception to our recent post on Code Reviews has been strong. Catch up!

Amid a maelstrom of discussion on whether or not AI is killing SaaS, one of the top publicly listed SaaS companies in the world has just reported record revenues, clearing well over $1.1B in ARR for the first time with a 28% margin. As we comment on the pod, Aaron Levie is the rare public company CEO equally at home in both worlds of Silicon Valley and Wall Street/Main Street, by day helping 70% of the Fortune 500 with their Enterprise Advanced Suite, and yet...

METR’s Joel Becker on exponential Time Horizon Evals, Threat Models, and the Limits of AI Productivity

This is a free preview of a paid episode. To hear more, visit www.latent.space

AIE Europe CFP and AIE World’s Fair paper submissions for CAIS peer review are due TODAY - do not delay! Last call ever.

We’re excited to welcome METR for their first LS Pod, hopefully the first of many:

METR are the keepers of what is currently the single most infamous chart in AI:

But every Latent Space reader should be sophisticated enough to know that the details matter and that hype and hyperbole go hand in hand...

[LIVE] Anthropic Distillation & How Models Cheat (SWE-Bench Dead) | Nathan Lambert & Sebastian Raschka

Swyx joined SAIL! Thank you SAIL Media, Prof. Tom Yeh, 8Lee, Hamid Bagheri, c9n, and many others for tuning into SAIL Live #6 with Nathan Lambert and Sebastian Raschka, PhD. Sharing here for the LS paid subscribers.

We covered:

This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit www.latent.space/subscribe

🔬Searching the Space of All Possible Materials — Prof. Max Welling, CuspAI

Editor’s note: CuspAI raised a $100m Series A in September and is rumored to have reached a unicorn valuation. They have all-star advisors from Geoff Hinton to Yann LeCun and a team of deep domain experts to tackle this next frontier in AI applications.

In this episode, Max Welling traces the thread connecting quantum gravity, equivariant neural networks, diffusion models, and climate-focused materials discovery (yes, there is one!!!).

We begin with a provocative framing: experiments as computation. Welling describes the idea of a “physics processing unit”—a world in which digital models and physical experiments work tog...

Claude Code for Finance + The Global Memory Shortage: Doug O'Laughlin, SemiAnalysis

This is a free preview of a paid episode. To hear more, visit www.latent.space

First speakers for AIE Europe and AIEi Miami have been announced. If you’re in Asia/Aus, come by Singapore and Melbourne. AI Engineering is going global!

One year ago today, Anthropic launched Claude Code, to not much fanfare:

The word of mouth was incredibly strong, however, and so we were glad to be one of the first podcasts to invite Boris and Cat on in early May:

As we discussed on the po...

⚡️The End of SWE-Bench Verified — Mia Glaese & Olivia Watkins, OpenAI Frontier Evals & Human Data

Olivia Watkins (Frontier Evals team) and Mia Glaese (VP of Research at OpenAI, leading the Codex, human data, and alignment teams) discuss a new blog post (https://openai.com/index/why-we-no-longer-evaluate-swe-bench-verified/) arguing that SWE-Bench Verified—long treated as a key “North Star” coding benchmark—has become saturated and highly contaminated, making it less useful for measuring real coding progress. SWE-Bench Verified originated as a major OpenAI-led cleanup of the original Princeton SWE-Bench benchmark, including a large human review effort with nearly 100 software engineers and multiple independent reviews to curate ~500 higher-quality tasks. But recent findings show that many remaining failures can refl...

Bitter Lessons in Venture vs Growth: Anthropic vs OpenAI, Noam Shazeer, World Labs, Thinking Machines, Cursor, ASIC Economics — Martin Casado & Sarah Wang of a16z

Tickets for AIEi Miami and AIE Europe are live, with first wave speakers announced!

From pioneering software-defined networking to backing many of the most aggressive AI model companies of this cycle, Martin Casado and Sarah Wang sit at the center of the capital, compute, and talent arms race reshaping the tech industry. As partners at a16z investing across infrastructure and growth, they’ve watched venture and growth blur, model labs turn dollars into capability at unprecedented speed, and startups raise nine-figure rounds before monetization. Martin and Sarah join us to unpack the new financing playbook for AI...

Owning the AI Pareto Frontier — Jeff Dean

From rewriting Google’s search stack in the early 2000s to reviving sparse trillion-parameter models and co-designing TPUs with frontier ML research, Jeff Dean has quietly shaped nearly every layer of the modern AI stack. As Chief AI Scientist at Google and a driving force behind Gemini, Jeff has lived through multiple scaling revolutions from CPUs and sharded indices to multimodal models that reason across text, video, and code.

Jeff joins us to unpack what it really means to “own the Pareto frontier,” why distillation is the engine behind every Flash model breakthrough, how energy (in picojoules) not FL...

🔬Beyond AlphaFold: How Boltz is Open-Sourcing the Future of Drug Discovery

This podcast features Gabriele Corso and Jeremy Wohlwend, co-founders of Boltz and authors of the Boltz Manifesto, discussing the rapid evolution of structural biology models from AlphaFold to their own open-source suite, Boltz-1 and Boltz-2. The central thesis is that while single-chain protein structure prediction is largely “solved” through evolutionary hints, the next frontier lies in modeling complex interactions (protein-ligand, protein-protein) and generative protein design, which Boltz aims to democratize via open-source foundations and scalable infrastructure.

Full Video Pod

On YouTube!

Timestamps

* 00:00 Introduction to Benchmarking and the “Solved...

The First Mechanistic Interpretability Frontier Lab — Myra Deng & Mark Bissell of Goodfire AI

From Palantir and Two Sigma to building Goodfire into the poster-child for actionable mechanistic interpretability, Mark Bissell (Member of Technical Staff) and Myra Deng (Head of Product) are trying to turn “peeking inside the model” into a repeatable production workflow by shipping APIs, landing real enterprise deployments, and now scaling the bet with a recent $150M Series B funding round at a $1.25B valuation.

In this episode, we go far beyond the usual “SAEs are cool” take. We talk about Goodfire’s core bet: that the AI lifecycle is still fundamentally broken because the only reliable control we have is...

🔬 Automating Science: World Models, Scientific Taste, Agent Loops — Andrew White

Editor’s note: Welcome to our new AI for Science pod, with your new hosts RJ and Brandon! See the writeup on Latent.Space for more details on why we’re launching 2 new pods this year. RJ Honicky is a co-founder and CTO at MiraOmics (https://miraomics.bio/), building AI models and services for single cell, spatial transcriptomics and pathology slide analysis. Brandon Anderson builds AI systems for RNA drug discovery at Atomic AI (https://atomic.ai). Anything said on this podcast is his personal take — not Atomic’s.

—-

From building molecular dynamics simulations at the Uni...

⚡️ Prism: OpenAI's LaTeX "Cursor for Scientists" — Kevin Weil & Victor Powell, OpenAI for Science

From building Crixet in stealth (so stealthy Kevin had to hunt down Victor on Reddit to explore an acquisition) to launching Prism (https://openai.com/prism/) as OpenAI's free AI-native LaTeX editor, Kevin Weil (VP of OpenAI for Science) and Victor Powell (Product Lead on Prism) are embedding frontier reasoning models like GPT-5.2 directly into the scientific publishing workflow—turning weeks of LaTeX wrestling into minutes of natural language instruction, and accelerating the path from research breakthrough to published paper.

We discuss:

What Prism is: a free AI-native LaTeX editor with GPT-5.2 embedded directly into th...

Captaining IMO Gold, Deep Think, On-Policy RL, Feeling the AGI in Singapore — Yi Tay

From shipping Gemini Deep Think and IMO Gold to launching the Reasoning and AGI team in Singapore, Yi Tay has spent the last 18 months living through the full arc of Google DeepMind’s pivot from architecture research to RL-driven reasoning—watching his team go from a dozen researchers to 300+, training models that solve International Math Olympiad problems in a live competition, building the infrastructure to scale deep thinking across every domain, and driving Gemini to the top of the leaderboards across every category. Yi returns to dig into the inside story of the IMO effort and more!

We...

Brex’s AI Hail Mary — With CTO James Reggio

From building internal AI labs to becoming CTO of Brex, James Reggio has helped lead one of the most disciplined AI transformations inside a real financial institution where compliance, auditability, and customer trust actually matter.

We sat down with Reggio to unpack Brex’s three-pillar AI strategy (corporate, operational, and product AI) [https://www.brex.com/journal/brex-ai-native-operations], how SOP-driven agents beat overengineered RL in ops, why Brex lets employees “build their own AI stack” instead of picking winners [https://www.conductorone.com/customers/brex/], and how a small, founder-heavy AI team is shipping production agents to 40,000+ compan...

Artificial Analysis: The Independent LLM Analysis House — with George Cameron and Micah Hill-Smith

Don’t miss George’s AIE talk: https://www.youtube.com/watch?v=sRpqPgKeXNk

—-

From launching a side project in a Sydney basement to becoming the independent gold standard for AI benchmarking—trusted by developers, enterprises, and every major lab to navigate the exploding landscape of models, providers, and capabilities—George Cameron and Micah Hill-Smith have spent two years building Artificial Analysis into the platform that answers the questions no one else will: Which model is actually best for your use case? What are the real speed-cost trade-offs? And how open is "open" really?

We d...

Artificial Analysis: Independent LLM Evals as a Service — with George Cameron and Micah Hill-Smith

Happy New Year! You may have noticed that in 2025 we had moved toward YouTube as our primary podcasting platform. As we’ll explain in the next State of Latent Space post, we’ll be doubling down on Substack again and improving the experience for the over 100,000 of you who look out for our emails and website updates!

We first mentioned Artificial Analysis in 2024, when it was still a side project in a Sydney basement. They were then one of the few AIGrant companies to raise a full seed round from Nat Friedman and Daniel Gross, and have n...

[State of Evals] LMArena's $1.7B Vision — Anastasios Angelopoulos, LMArena

We are reupping this episode after LMArena announced their fresh Series A (https://www.theinformation.com/articles/ai-evaluation-startup-lmarena-valued-1-7-billion-new-funding-round?rc=luxwz4), raising $150M at a $1.7B valuation, with $30M annualized consumption revenue (aka $2.5M MRR) after their September evals product launch.

—-

From building LMArena in a Berkeley basement to raising $100M and becoming the de facto leaderboard for frontier AI, Anastasios Angelopoulos returns to Latent Space to recap 2025 in one of the most influential platforms in AI—trusted by millions of users, every major lab, and the entire industry to answer one question: whic...

[NeurIPS Best Paper] 1000 Layer Networks for Self-Supervised RL — Kevin Wang et al, Princeton

From undergraduate research seminars at Princeton to winning the Best Paper award at NeurIPS 2025, Kevin Wang, Ishaan Javali, Michał Bortkiewicz, Tomasz Trzcinski, and Benjamin Eysenbach defied conventional wisdom by scaling reinforcement learning networks to 1,000 layers deep—unlocking performance gains that the RL community thought impossible. We caught up with the team live at NeurIPS to dig into the story behind RL1000: why deep networks have worked in language and vision but failed in RL for over a decade (spoiler: it’s not just about depth, it’s about the objective), how they discovered that self-supervised RL (learning representations of states, actions, and fut...

[State of Code Evals] After SWE-bench, Code Clash & SOTA Coding Benchmarks recap — John Yang

From creating SWE-bench in a Princeton basement to shipping CodeClash, SWE-bench Multimodal, and SWE-bench Multilingual, John Yang has spent the last year and a half watching his benchmark become the de facto standard for evaluating AI coding agents—trusted by Cognition (Devin), OpenAI, Anthropic, and every major lab racing to solve software engineering at scale. We caught up with John live at NeurIPS 2025 to dig into the state of code evals heading into 2026: why SWE-bench went from ignored (October 2023) to the industry standard after Devin's launch (and how Walden emailed him two weeks before the big reveal), how the be...

[State of Post-Training] From GPT-4.1 to 5.1: RLVR, Agent & Token Efficiency — Josh McGrath, OpenAI

From pre-training data curation to shipping GPT-4o, o1, o3, and now GPT-5 thinking and the shopping model, Josh McGrath has lived through the full arc of OpenAI’s post-training evolution—from the PPO vs DPO debates of 2023 to today’s RLVR era, where the real innovation isn’t optimization methods but data quality, signal trust, and token efficiency. We sat down with Josh at NeurIPS 2025 to dig into the state of post-training heading into 2026: why RLHF and RLVR are both just policy gradient methods (the difference is the input data, not the math), how GRPO from DeepSeek Math was unde...

[State of RL/Reasoning] IMO/IOI Gold, OpenAI o3/GPT-5, and Cursor Composer — Ashvin Nair, Cursor

From Berkeley robotics and OpenAI’s 2017 Dota-era internship to shipping RL breakthroughs on GPT-4o, o1, and o3, and now leading model development at Cursor, Ashvin Nair has done it all. We caught up with Ashvin at NeurIPS 2025 to dig into the inside story of OpenAI’s reasoning team (spoiler: it went from a dozen people to 300+), why IOI Gold felt reachable in 2022 but somehow didn’t change the world when o1 actually achieved it, how RL doesn’t generalize beyond the training distribution (and why that means you need to bring economically useful tasks into distribution by co-designing products...
